How a Karpathy gist, an Obsidian vault, and a small bootstrapped team convinced me that AI knowledge needs somewhere to live.

May 3, 2026

There's a problem with working in AI-assisted teams that nobody really talks about yet, probably because we're all still figuring out what it even means to work in an AI-assisted team. The problem is that every new conversation starts from zero. You open a chat, you give it some context, you get something useful done, and then that conversation closes. The next time you or a colleague opens a new chat and needs to do something related, you start from zero again. Sometimes you remember to copy something across. Mostly you don't, or you can't find it, or you half-remember that someone solved this already but you have no idea where.

It's the same problem that made Slack feel liberating for about two years and then quietly became one of the most reliable ways to lose information in the history of the written word. Knowledge shared in Slack is not knowledge stored. It's knowledge broadcast. You're sending something into a stream that flows on without it, and if nobody saves it somewhere else, it's effectively gone.

I'd been thinking about this for a while. And then Andrej Karpathy published something that gave me the vocabulary for what I'd been circling around.

In April 2026, Karpathy posted a gist he called llm-wiki. The idea was deceptively simple: instead of using an LLM as a retrieval system over raw documents, you use it to incrementally build and maintain a persistent wiki. When you add a new source, the LLM doesn't just index it for later. It reads it, extracts what matters, and integrates it into the existing structure, updating pages, flagging contradictions, strengthening the synthesis. The knowledge is compiled once and then kept current, rather than re-derived from scratch on every query.

The phrase that stuck with me was "the wiki is a persistent, compounding artifact." The cross-references are already there. The synthesis already reflects everything you've put in. He described having the LLM open on one side and Obsidian on the other, the LLM making edits while he browsed the results in real time. The LLM as programmer, Obsidian as IDE.

I'd already built something close to this for myself without really naming it. Reading the gist was one of those moments where someone else's framing suddenly clarifies something you've been doing instinctively.

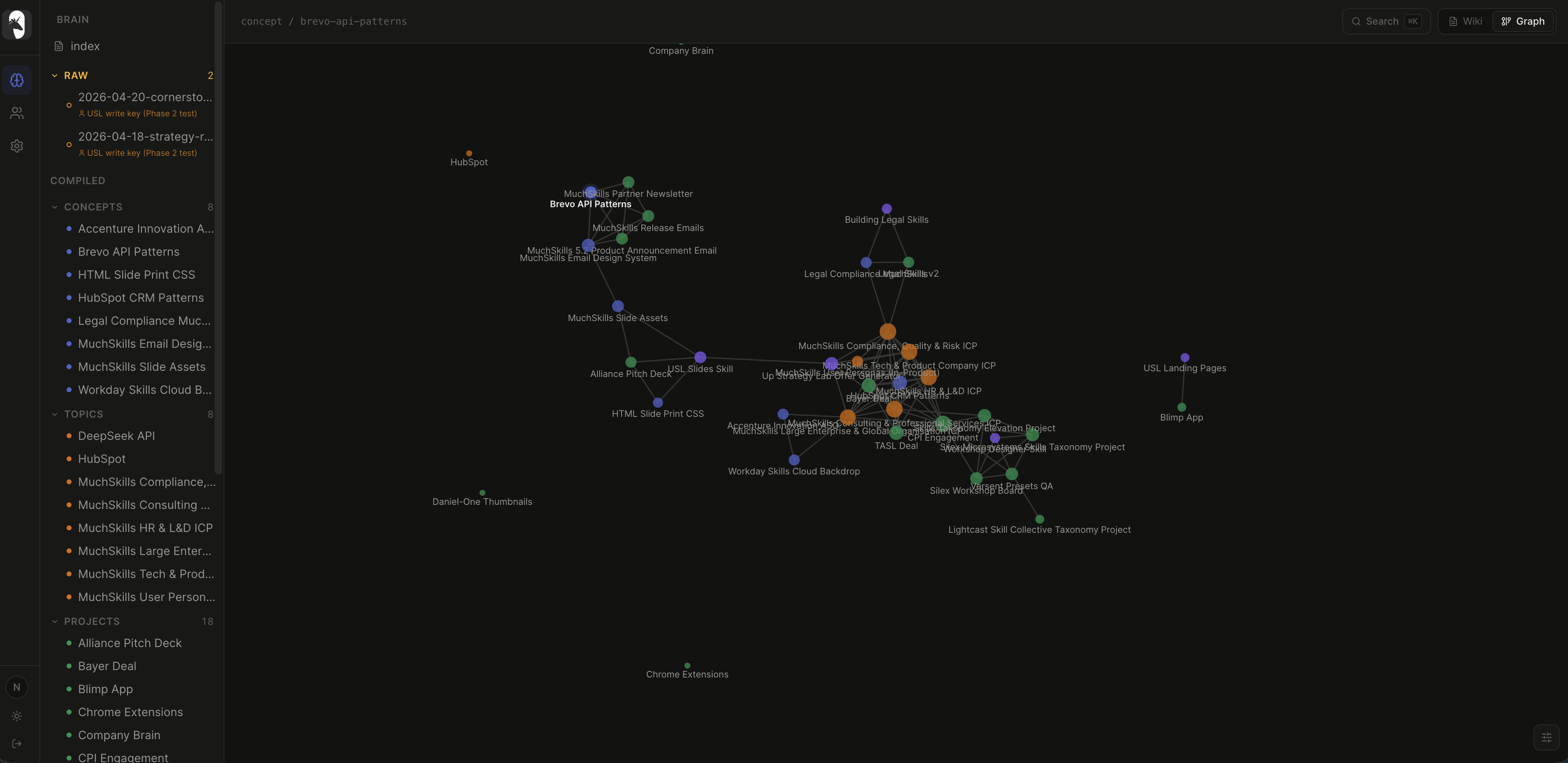

A few months before, I'd set up a personal knowledge workflow built around Obsidian and Claude Code. The core mechanic was simple: whenever I was doing any meaningful work in Claude Code, relevant knowledge from that session would automatically get pushed to a raw folder in my Obsidian vault. Then, on a regular cadence, that raw material would get compiled, crosslinked, organised into a proper wiki structure. The graph would stay alive. Pages would update. Things would start connecting to each other.

In practice I barely had to think about it. My main job was to occasionally drop in articles or notes that hadn't come through Claude Code, things I'd read or thought about that I wanted in the system. Everything else was handled. The brain kept growing, kept organising, kept indexing itself.

What I noticed over time is that this changed something subtle about how I worked. I stopped worrying about whether I'd remember things. Not because my memory got better, but because I trusted the system. There's a particular kind of cognitive overhead that comes from half-remembering something useful and not being sure you'll be able to find it again. This removed that.

The anecdote that I keep coming back to: at some point I knew I had saved a list of image assets somewhere for MuchSkills presentation slides, a carefully organised set of things I'd want to use in decks. I couldn't remember which project it was in, couldn't remember the exact context, couldn't remember enough to know what to search for. Normally this kind of thing turns into a twenty minute exercise in opening old chats and scrolling. Instead I just asked Claude to check my Second Brain with an extremely vague description of what I was looking for. It found it immediately. A nicely organised list, exactly what I needed, surfaced in a few seconds from a prompt that barely described the thing.

That experience made something concrete for me: the value wasn't just in storing information. It was in being able to retrieve things you couldn't quite name.

If this worked for me personally, it raised an obvious question. We're a small team. Everyone at Up Strategy Lab and MuchSkills is actively using AI as part of their actual workflow now, not as an experiment, not occasionally, but constantly. Daniel is in it for sales and GTM. There's knowledge being generated in those conversations that is genuinely useful to other people on the team. Integrations figured out. Bugs solved. Positioning language that actually landed. Client context that took time to build up.

And what happened to all of it? It lived in individual chat histories that nobody else could access, or it got shared in Slack where it dissolved into the timeline, or it stayed in someone's head.

I'd already noticed we were sharing .skills files over Slack as a workaround. These were context files we'd built up for specific domains, things like our brand voice, ICP personas, service offer structures. The idea was good. The execution was a mess. You never knew which version was current. Someone would update their local copy and forget to reshare it. The file would drift. Searching Slack for "latest skills file" is not a workflow.

The brainwave was this: if the Second Brain was my personal knowledge anchor, the same principle could work as a shared anchor for the whole team. Not a shared folder. Not a document library. A living wiki that any team member's AI instance could query at the start of a conversation, and any team member's AI instance could push new knowledge into at the end of one. Better that the AI compiles and organises, rather than us trying to do it manually. Better that the index stays current automatically. Better that when someone has already fought through some annoying integration problem, that solution doesn't disappear into a private chat history.

I built Skills OS as an MCP server, running on Railway, with a JSON-RPC 2.0 interface. The technical implementation is less interesting than the design principle. The point was that it had to be frictionless enough that people would actually use it.

The knowledge lives as a structured wiki, organised by topic and updated over time. When you do something useful in a conversation, you tell Claude Chat (or Cowork, or Claude Code) to compile and push the relevant knowledge to Skills OS. Claude Code does this automatically. For everything else it's a light, intentional step. The server handles slug-based merging, so new knowledge gets integrated into existing entries rather than creating a new duplicate page every time.

The setup for the team was minimal. Install the MCP server, point it at the endpoint. After that, when you're working on something and you know you've figured something out worth keeping, you just say so. The brain grows from there.

I introduced it to the team more or less by showing them what was already in it from my own use, which I think was more convincing than any explanation I could have given. Here is a thing I needed six weeks ago that I can still find in two seconds. That tends to land.

Today Skills OS is a knowledge wiki of the work-related conversations across our team that have been worth preserving. Not everything. The system is designed to keep the useful bits, not to archive everything indiscriminately. The LLM decides what's worth compiling into a page and what can be discarded.

In practice it means things like: if one person has already worked out the correct way to handle a Brevo automation sequence, that knowledge lives in Skills OS. The next time anyone needs to touch Brevo, their AI instance already has enough context to start from an informed position rather than from scratch. The troubleshooting that happened once doesn't need to happen again. The positioning language someone spent an afternoon getting right doesn't need to be rediscovered the next time someone needs to write for that audience.

The comparison I keep reaching for is the difference between a team that takes notes and a team that doesn't. It's not that the note-taking team is smarter or more capable. It's that they compound. Each thing they learn is available to the next thing they do. Teams that don't take notes don't compound, they just keep being capable of roughly the same things. The version of this that runs on AI workflows is Skills OS.

What the llm-wiki framing gave me was a way to articulate why this is different from RAG, different from a shared Google Drive, different from a Notion wiki that someone had to write and that is probably already out of date. The key difference is who does the maintenance. In most systems, the human has to actively update the knowledge base, which means it only gets updated when someone has the time and discipline to do it. Which means it doesn't really get updated.

The compounding artifact model inverts this. The LLM does the grunt work of summarising, cross-referencing, filing, flagging contradictions. The humans just do the work they were already doing and occasionally tell the system what's worth keeping. The wiki maintains itself. The graph stays alive because the graph is being written by the same thing that generates the knowledge in the first place.

The Second Brain started as a personal experiment and became something I relied on. Skills OS started as a team experiment and is becoming something the team relies on. I think this is genuinely a different way of working with AI than most teams are doing. Not because it requires better tools or smarter prompting, but because it requires treating your AI-generated knowledge as something worth keeping, and building a system that makes keeping it easy.

The brain doesn't forget because the brain is doing the remembering for us. That turns out to be the important part.

Noel Braganza is a designer and founder based in Gothenburg, Sweden. He co-founded MuchSkills, a bootstrapped SaaS platform for skills intelligence, and Up Strategy Lab, a strategy and design consultancy. His background is in Interaction Design, with research experience at the MIT Design Lab.

Most of his work starts from the same instinct: that the inherited assumptions underneath a problem are usually more interesting than the problem itself. That's true of how organisations think about skills, how founders talk about growth, and how people are starting to make sense of AI.

MuchSkills is profitable and growing without external funding. That shapes how he thinks about building, what's worth optimising for, and what isn't.

He writes occasionally at noelbraganza.com.